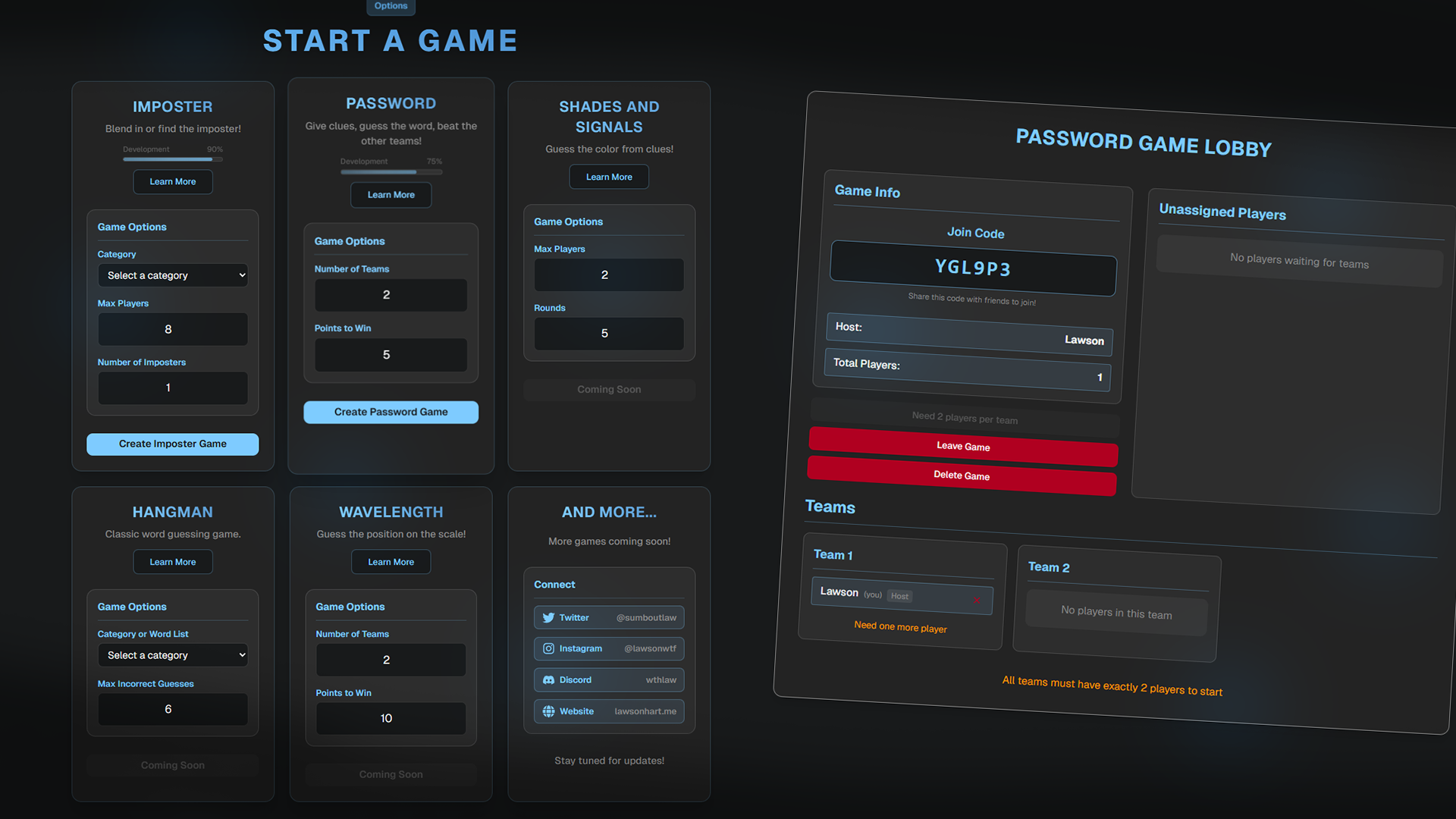

Rebuilding my games app from polling to realtime state sync

Overview

This post compares two versions of the same product. The archived version lives at github.com/oyuh/games-arch. The current version is the refactor in this repo.

Both versions solve the same product problem. A player creates a room, shares a code, joins fast, and plays party games without an account. The product goal stayed stable. The runtime model did not.

The old version, games-arch, was one Next.js app with route handlers, polling, and a lot of game logic spread across large client pages. The new version, games-refac, splits the system into a React and Vite frontend, a Hono API, a shared contract package, Zero for synchronized query and mutation state, Postgres as the durable store, and a dedicated presence WebSocket.

That sounds like a normal rewrite story. The useful part is not the tool list. The useful part is why the rewrite became necessary, what improved, and what now costs more to run.

What the old version did well

Before talking about the refactor, it is worth being fair to games-arch. It was not a bad app. It shipped real game logic and solved a real use case. The architecture was direct. A Next page rendered. Client code called fetch('/api/...'). Route handlers read or mutated Postgres rows. The page polled every few seconds to stay current.

That approach had one big advantage. It was easy to understand at first. There was no separate API service, no separate sync service, no shared contract package, and no extra deployment surface for presence. When the app was smaller, that simplicity helped me ship fast.

The old strengths

The strengths were practical. Everything lived close together. Adding a new game flow often meant adding a page and a few API routes. Deployment complexity was lower. The mental model matched what many people already know from Next App Router. Most of all, the old version let me prove the product before I invested in heavier architecture.

That part matters. Starting with the new stack from day one would have slowed the project before the product need was even clear.

Where the old architecture started to hurt

The weaknesses showed up once the app stopped being a few routes and some UI state. Multiplayer games add phases, reconnect rules, cleanup paths, and timing edge cases. At that point the original shape stopped fitting the product.

1. Polling everywhere

This is the largest difference between the two versions. games-arch kept the UI current by repeatedly calling the API. The old Imposter pages polled aggressively. Password pages did the same with different intervals. That kept the app live enough, though it also taxed almost every layer.

Polling adds requests even when nothing changes. It duplicates loading and error logic across pages. It pushes the app toward eventual consistency instead of synchronized state. It also creates more race conditions around redirects, timers, and phase transitions.
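
To make that cost concrete, here is a minimal sketch of the polling shape. It is not code from games-arch: `createPoller`, `GameSnapshot`, and the `version` field are hypothetical names, and the fetcher is synchronous so the example stays self-contained. In the real app each tick would be a `fetch('/api/...')` call driven by a timer.

```typescript
// Hypothetical snapshot shape; the real game payloads are larger.
type GameSnapshot = { version: number; phase: string };

// Polls by calling `fetchSnapshot` on every tick, whether or not anything changed.
function createPoller(
  fetchSnapshot: () => GameSnapshot,
  onChange: (snapshot: GameSnapshot) => void,
) {
  let lastVersion: number | null = null;
  let requests = 0;
  let wasted = 0;

  return {
    // In a browser this would be driven by setInterval.
    tick(): void {
      requests += 1;
      const snapshot = fetchSnapshot();
      if (snapshot.version === lastVersion) {
        wasted += 1; // a full round trip that told the UI nothing new
        return;
      }
      lastVersion = snapshot.version;
      onChange(snapshot);
    },
    stats: () => ({ requests, wasted }),
  };
}

// Simulated server state that changes once across five polls.
let server: GameSnapshot = { version: 1, phase: "clues" };
const seenPhases: string[] = [];
const poller = createPoller(() => server, (s) => seenPhases.push(s.phase));

poller.tick();
poller.tick();
poller.tick();
server = { version: 2, phase: "voting" };
poller.tick();
poller.tick();

console.log(poller.stats()); // { requests: 5, wasted: 3 }
console.log(seenPhases); // [ 'clues', 'voting' ]
```

Three of the five requests carried no new information, and the phase change was only noticed on the next tick after it happened.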

Polling feels fine when the state surface is small. It starts to drag once a page needs to answer questions like these: Did everyone submit? Did the phase advance? Did someone disconnect? Did the game end? Did I get kicked? Do I redirect now or after one more fetch?

2. The API surface kept expanding per game

The archived repo exposed many route trees. Imposter had create, fetch, start, clue, vote, should-vote, heartbeat, and leave endpoints. Password had its own parallel set of routes for create, join, start, category vote, word selection, clue entry, guesses, round transitions, game end, and leave flows.

None of that is wrong on its own. The problem is what happens over time. Each game invents its own API shape. Shared behavior gets reimplemented in slightly different ways. One rule change touches several endpoints and several pages. Client and server drift unless you stay very disciplined.

The old version optimized for shipping one feature at a time. It did not optimize for consistency once the app grew.

3. Too much state shaping lived in pages and route handlers

The old page components often carried too much. The Imposter page handled poll loops, local loading state, clue and vote submission, disconnect logic, redirects, notifications, leave behavior, heartbeat work, history rendering, and special-case transitions. Password had similar pressure, especially because team-specific and global phases lived together.

Once a page does that much, the file stops being a view and starts acting like a custom controller for the entire game. That makes future UI edits much harder than they should be.

4. Realtime and presence were bolted onto request and response flows

The old app supported multiplayer updates and connection tracking, though the implementation was scattered. Presence depended on polling, heartbeat routes, and game-specific disconnect logic stored inside game data. That meant presence was not a system. It was a repeated concern each game had to solve again.

That feels manageable until you need it to be dependable. Once reconnection and cleanup start to matter, duplicated presence logic becomes a real maintenance problem.

5. Product progress and architectural debt were mixed together

The old repo clearly shows real iteration. There are backwards-compatibility comments, legacy fields kept around for old UI expectations, and route handlers that do transition logic and cleanup work at the same time. That is normal in a fast-moving app. It is also a sign that the architecture is carrying too much historical weight in too many places.

What changed in the refactor

The refactor is not Next.js with cleaner files. It is a different layout and a different runtime model.

The new structure

games-refac splits the repo into apps/web, apps/api, and packages/shared. That one move changes almost everything, because the project now has an explicit contract layer instead of an implied one.

The frontend is only the frontend

The web app now runs on React 19, Vite, and React Router. The frontend renders pages, stores lightweight browser identity, opens realtime connections, subscribes to state, and invokes shared mutators. It does not pretend to be the API runtime anymore.

That split cleaned up the page model. Pages mostly render current state and trigger actions. They do not spend their time orchestrating poll loops and route-specific fetch logic.

The backend is an actual service

The API is now a Hono service on Node. It owns /api/zero/query, /api/zero/mutate, /health, /debug/build-info, /api/cleanup, and the /presence WebSocket upgrade path. That is a much smaller and clearer external surface than one route tree per game mechanic.

The biggest improvement is how actions are modeled. The client invokes named mutators that resolve against shared definitions instead of hitting a public route for each tiny action.
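
A rough sketch of that idea, under stated assumptions: the mutator names, argument shapes, and `runMutator` helper below are hypothetical and do not reproduce Zero's mutator API or the repo's actual definitions. The point is only the shape, which is one shared map of named actions with a single entry point instead of a public route per action.

```typescript
// Hypothetical game state; the real state carries more fields.
type ImposterState = {
  phase: "clues" | "voting";
  clues: Record<string, string>; // playerId -> clue
  votes: Record<string, string>; // voterId -> targetId
};

// Argument shapes for each named mutator, shared by client and server.
type MutatorArgs = {
  "imposter.submitClue": { playerId: string; clue: string };
  "imposter.startVoting": Record<string, never>;
  "imposter.vote": { voterId: string; targetId: string };
};
type MutatorName = keyof MutatorArgs;

type MutatorMap = {
  [N in MutatorName]: (state: ImposterState, args: MutatorArgs[N]) => ImposterState;
};

// One shared map of named actions instead of one public route per action.
const mutators: MutatorMap = {
  "imposter.submitClue": (state, args) => {
    if (state.phase !== "clues") throw new Error("not in the clue phase");
    return { ...state, clues: { ...state.clues, [args.playerId]: args.clue } };
  },
  "imposter.startVoting": (state) => ({ ...state, phase: "voting" }),
  "imposter.vote": (state, args) => {
    if (state.phase !== "voting") throw new Error("not in the voting phase");
    return { ...state, votes: { ...state.votes, [args.voterId]: args.targetId } };
  },
};

// Single entry point: resolve the mutator by name and apply it.
function runMutator<N extends MutatorName>(
  name: N,
  state: ImposterState,
  args: MutatorArgs[N],
): ImposterState {
  return mutators[name](state, args);
}

let game: ImposterState = { phase: "clues", clues: {}, votes: {} };
game = runMutator("imposter.submitClue", game, { playerId: "p1", clue: "ocean" });
game = runMutator("imposter.startVoting", game, {});
game = runMutator("imposter.vote", game, { voterId: "p1", targetId: "p2" });

console.log(game.phase); // voting
console.log(game.clues); // { p1: 'ocean' }
console.log(game.votes); // { p1: 'p2' }
```

Because the argument types live next to the mutators, a rule change is made in one place and the compiler flags every caller that no longer matches.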

Shared contracts are first-class

The shared package now contains the Drizzle schema, the Zero schema, query definitions, mutator implementations, and shared game types. In the old version, behavior often lived in the relationship between a page and a route handler. In the new version, behavior lives in shared queries and mutators.

That gives the repo a real center of gravity. Contracts are imported instead of re-described. Refactors touch fewer surfaces. It is much easier to find the real source of behavior when a game rule changes.

The biggest upgrade, from polling to synchronized state

This is the real reason the new version is better. games-arch used polling to simulate liveness. games-refac uses Rocicorp Zero to synchronize query and mutation state through a cache layer backed by Postgres.

What changed in practice

The browser now creates one Zero client. Pages subscribe with useQuery(...). Mutations run through shared mutators. Zero forwards the work through the API and sends updated state back through subscriptions.

The main win is not that the app is now realtime. The main win is that the UI no longer fakes realtime by asking the same question over and over. That removes page-specific fetch loops, cuts down on manual refresh state, reduces poll-then-redirect logic, and lowers the amount of edge-case code around stale snapshots.

The mental model changes too. The old model was: fetch the latest game again and hope the UI catches up at the right moment. The new model is: subscribe to the slice of state you care about and mutate the source of truth. That is a much better fit for multiplayer phases and timers.
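
The subscribe-and-mutate model can be illustrated with a tiny in-memory store. This is explicitly not Zero's API, and it has none of Zero's sync, caching, or server round trips. `createStore` and the `Room` type are hypothetical, chosen only to show how the view reacts to mutations instead of re-asking.

```typescript
type Listener<T> = (value: T) => void;

// Minimal in-memory store: only the subscribe/mutate shape, nothing more.
function createStore<T>(initial: T) {
  let current = initial;
  const listeners = new Set<Listener<T>>();

  return {
    // Delivers the current value immediately, then every later change.
    subscribe(listener: Listener<T>): () => void {
      listeners.add(listener);
      listener(current);
      return () => {
        listeners.delete(listener);
      };
    },
    // Changes the source of truth and notifies every subscriber.
    mutate(update: (prev: T) => T): void {
      current = update(current);
      listeners.forEach((listener) => listener(current));
    },
  };
}

type Room = { phase: "lobby" | "playing" | "ended"; players: string[] };
const room = createStore<Room>({ phase: "lobby", players: ["ana"] });

const rendered: string[] = [];
const unsubscribe = room.subscribe((r) => rendered.push(`${r.phase}:${r.players.length}`));

room.mutate((r) => ({ ...r, players: [...r.players, "ben"] }));
room.mutate((r) => ({ ...r, phase: "playing" }));
unsubscribe();
room.mutate((r) => ({ ...r, phase: "ended" })); // no longer observed

console.log(rendered); // [ 'lobby:1', 'lobby:2', 'playing:2' ]
```

Every entry in `rendered` corresponds to a real change. There is no tick that fires when nothing happened.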

Presence became a system

The refactor also separated realtime game data from presence. The app now uses a dedicated presence WebSocket at /presence. That channel updates sessions.lastSeen and game attachment state on a heartbeat interval. Presence is no longer hidden inside game-specific heartbeat routes.

That split is cleaner and easier to generalize. Zero handles data synchronization. The presence socket answers a different question: is this browser still alive and in this room? Cleanup and reconnection logic become easier to reason about once session liveness lives in one place.
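
Here is a minimal sketch of heartbeat-based liveness, assuming a `lastSeen` timestamp per session as described above. The interval, the staleness threshold, the `Session` shape, and the helper names are all assumptions for illustration, and an in-memory map stands in for the sessions table.

```typescript
// Hypothetical in-memory stand-in for the sessions table.
type Session = { id: string; gameId: string | null; lastSeen: number };

const HEARTBEAT_MS = 5000; // assumed interval, not taken from the repo
const STALE_AFTER_MS = 3 * HEARTBEAT_MS; // assumed: three missed beats means gone

const sessionTable = new Map<string, Session>();

// Called for each heartbeat message received on the presence socket.
function recordHeartbeat(id: string, gameId: string | null, now: number): void {
  sessionTable.set(id, { id, gameId, lastSeen: now });
}

function isAlive(session: Session, now: number): boolean {
  return now - session.lastSeen <= STALE_AFTER_MS;
}

// Sessions attached to a game that still count as connected.
function livePlayers(gameId: string, now: number): string[] {
  return Array.from(sessionTable.values())
    .filter((s) => s.gameId === gameId && isAlive(s, now))
    .map((s) => s.id);
}

recordHeartbeat("ana", "g1", 0);
recordHeartbeat("ben", "g1", 0);
recordHeartbeat("ana", "g1", 20000); // ana keeps beating, ben went quiet

console.log(livePlayers("g1", 20000)); // [ 'ana' ]
```

With liveness in one place, cleanup becomes a query over stale sessions instead of logic each game has to carry.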

The data model stayed pragmatic, though it got more consistent

Both versions stay pragmatic about game state. Neither one tries to normalize every clue, vote, and round into a long chain of relational tables. That did not need to change.

What changed is consistency. The new schema has clear tables for sessions, imposter_games, password_games, chain_reaction_games, and chat_messages. Each game table still stores a lot of state in JSON columns, which is often the right fit for phase-shaped party game state.

The key difference is that the shared schema, shared types, and shared mutators now point at the same shape. The project is much more explicit about the data it expects to stay stable.
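
As a sketch of what "the same shape" can mean in practice, the example below pairs a shared type for a JSON state column with a runtime guard. The column names, phase names, and `parseState` helper are hypothetical, and only the `imposter_games` table name comes from the schema described above.

```typescript
// Hypothetical shape for the JSON state column of imposter_games.
type ImposterGameState = {
  phase: "lobby" | "clues" | "voting" | "results";
  round: number;
  clues: Record<string, string>;
};

// Hypothetical row: a few relational columns plus one JSON blob.
type ImposterGameRow = { id: string; code: string; hostId: string; state: ImposterGameState };

const PHASES: ReadonlyArray<string> = ["lobby", "clues", "voting", "results"];

// Runtime guard so stored JSON and the shared type cannot silently drift apart.
function parseState(json: string): ImposterGameState {
  const raw: unknown = JSON.parse(json);
  if (typeof raw !== "object" || raw === null) throw new Error("state must be an object");
  const candidate = raw as Record<string, unknown>;
  if (typeof candidate.phase !== "string" || PHASES.indexOf(candidate.phase) === -1) {
    throw new Error("unknown phase");
  }
  if (typeof candidate.round !== "number") throw new Error("round must be a number");
  if (typeof candidate.clues !== "object" || candidate.clues === null) {
    throw new Error("clues must be an object");
  }
  return raw as ImposterGameState;
}

const row: ImposterGameRow = {
  id: "game-1",
  code: "ABCD",
  hostId: "ana",
  state: parseState('{"phase":"clues","round":1,"clues":{"ana":"ocean"}}'),
};

console.log(row.state.phase); // clues
console.log(row.state.clues); // { ana: 'ocean' }
```

The JSON column keeps phase-shaped state flexible, while the shared type and guard keep every reader and writer agreeing on what is inside it.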

The frontend got smaller in the right places

The old pages carried a lot of custom orchestration. The new pages still hold multiplayer logic, because that is unavoidable, though the responsibility is narrower. Subscribe to the current game. Subscribe to room sessions. Open the presence socket. Invoke mutators. React to announcements, kick or end state, and timers.

That makes the pages feel less like a mini framework and more like a focused interface over shared state.

Observability improved a lot

This is the kind of change that sounds minor until production breaks. The new version tracks Zero connection state, Zero online and offline transitions, presence socket state, presence connect latency, API probe state, and build metadata from /debug/build-info.

The old version had useful logs, though the new version is much more intentional. Once the system has several moving pieces, you need quick answers to basic questions. Is the browser online? Is Zero connected? Is presence connected? Is the API reachable? Which build is the browser talking to? The refactor answers those questions faster.
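
Those questions map naturally onto a single status snapshot. The sketch below is an assumption about how such a snapshot could be summarized, not the repo's debug code. The field names, the `diagnose` helper, and the idea that the build is reported as a short string are all hypothetical.

```typescript
// Hypothetical snapshot of the connection signals listed above.
type ConnectionSnapshot = {
  browserOnline: boolean;
  zeroConnected: boolean;
  presenceConnected: boolean;
  apiReachable: boolean;
  build: string | null; // assumed to come from /debug/build-info
};

// Reports the first failing layer, checked from the outside in.
function diagnose(snapshot: ConnectionSnapshot): string {
  if (!snapshot.browserOnline) return "browser offline";
  if (!snapshot.apiReachable) return "API unreachable";
  if (!snapshot.zeroConnected) return "Zero disconnected";
  if (!snapshot.presenceConnected) return "presence socket disconnected";
  return `healthy (build ${snapshot.build ?? "unknown"})`;
}

const healthy: ConnectionSnapshot = {
  browserOnline: true,
  zeroConnected: true,
  presenceConnected: true,
  apiReachable: true,
  build: "abc123",
};

console.log(diagnose(healthy)); // healthy (build abc123)
console.log(diagnose({ ...healthy, zeroConnected: false })); // Zero disconnected
console.log(diagnose({ ...healthy, browserOnline: false, apiReachable: false })); // browser offline
```

Ordering the checks from the browser inward means the reported cause is the outermost failing layer, which is usually the one to fix first.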

Chat shows why the new structure works better

The refactor adds a shared chat model with a chat_messages table, shared chat.byGame queries, shared chat.send mutators, and a reusable ChatWindow component. In the old structure, chat would have meant more route handlers, more refresh logic, more response shapes, and more page-specific glue.

In the new version, chat looks like a shared data concern with a shared contract and a reusable subscriber. That is one of the clearest signs that the architecture is paying rent.
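
To show how small that contract can be, here is a sketch that borrows the `chat.byGame` and `chat.send` names but runs against an in-memory array. Everything else is an assumption for illustration: the `ChatMessage` fields, the empty-message check, and the explicit `now` argument. It does not use Zero's query or mutator APIs.

```typescript
// Hypothetical message shape; an array stands in for the chat_messages table.
type ChatMessage = {
  id: number;
  gameId: string;
  senderId: string;
  text: string;
  createdAt: number;
};

const chatMessages: ChatMessage[] = [];

const chat = {
  // Query: messages for one game, oldest first.
  byGame(gameId: string): ChatMessage[] {
    return chatMessages
      .filter((m) => m.gameId === gameId)
      .sort((a, b) => a.createdAt - b.createdAt);
  },
  // Mutator: validate, then append.
  send(args: { gameId: string; senderId: string; text: string; now: number }): ChatMessage {
    const text = args.text.trim();
    if (text.length === 0) throw new Error("empty message");
    const message: ChatMessage = {
      id: chatMessages.length + 1,
      gameId: args.gameId,
      senderId: args.senderId,
      text,
      createdAt: args.now,
    };
    chatMessages.push(message);
    return message;
  },
};

chat.send({ gameId: "g1", senderId: "ana", text: " hello ", now: 2 });
chat.send({ gameId: "g2", senderId: "cy", text: "other room", now: 3 });
chat.send({ gameId: "g1", senderId: "ben", text: "hi", now: 1 });

console.log(chat.byGame("g1").map((m) => `${m.senderId}: ${m.text}`));
// [ 'ben: hi', 'ana: hello' ]
```

Any game page can reuse the same query and mutator, so a component like `ChatWindow` only needs a game id.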

The deployment model is more honest now

The old version had the appeal of one consolidated app. The new version is more explicit about what production already needed. Vercel serves the frontend SPA. Railway runs the API service. Railway also runs a separate Zero cache service. Postgres lives in Railway Postgres or Neon.

Yes, that is more moving parts. It is also a better match for the real runtime responsibilities. Static delivery, API handling, realtime sync, and durable storage are separate concerns. Pretending they are one concern only makes the app look simpler on paper.

So why is the new version better

The answer is not one tool. The new version has cleaner boundaries between UI, backend, contracts, schema, synchronization, and presence. Realtime behavior is based on state subscriptions instead of polling. The mutator and query model is easier to extend than a growing set of game-specific routes. Shared contracts reduce drift. Observability is stronger. Adding another game now has a better chance of fitting the same system instead of forcing a new one-off pattern.

But the refactor is not free

This is the part worth stating plainly. The new version is better. It is also more expensive to understand and operate.

More moving pieces

The old version was mostly a Next app plus Postgres. The new version is a web app, API service, shared package, Zero cache service, Postgres, a presence socket, and deployment wiring between all of them. That is real complexity.

Zero is another system to learn

Zero is not fetch with less code. It changes how queries, subscriptions, mutations, cache behavior, and client lifecycle work. That is good once the model clicks. It is still another architectural system you have to own.

Presence and realtime are now two channels

That split is the right design here, though it adds nuance. I now have to reason about Zero connectivity, presence socket connectivity, and the interaction between presence freshness and game state.

Infrastructure requirements are stricter

Local and production setup now need Docker-backed Postgres for development, a Zero cache process, wal_level=logical on Postgres, a direct upstream Postgres connection for Zero, and separate env vars for web, API, and Zero. This is manageable once documented. It is still heavier than a simple monolithic app.

Foundation quality is not the same as feature parity

The archived repo had more breadth in some places. It had more experiment-shaped features baked directly into the old structure. The refactor is more selective and more focused on foundation. That means the new version is stronger as a platform even though not every old idea has come over one-to-one yet. I think that is the right trade. It is still a trade.

Old vs new, in one table

| Area | games-arch | games-refac |
|---|---|---|
| App shape | One Next.js app | Split monorepo with web, api, shared |
| Frontend | Next.js client pages | React 19 + Vite SPA |
| Backend | Next route handlers | Hono Node service |
| Shared contract layer | Mostly implicit | First-class shared package |
| Realtime model | Polling | Zero subscriptions plus mutations |
| Presence | Game-specific heartbeat routes | Dedicated /presence WebSocket |
| API surface | Many per-game endpoints | Small service surface plus shared mutators |
| Deployment | Simpler single-app mental model | More explicit multi-service model |
| Debuggability | Mostly page and route level | Connection debug plus build-info plus service separation |
| Extensibility | Fast to hack | Better long-term structure |

The real lesson

The lesson is not that every app should be rewritten with more architecture. The lesson is narrower. A simple architecture stays simple only while the product still fits inside that shape.

games-arch was the right first version because it helped me find the real product and gameplay problems fast. games-refac is the right version now because the project needs a system that can survive more games, more shared state, more multiplayer edge cases, and more deployment reality.

This version feels better because the project moved from a working app with growing exceptions to a platform with explicit boundaries.

Closing

If I had to reduce the difference to one line, I would say this. The old version was easier to ship quickly. The new version is easier to trust.

For a multiplayer app with timers, room state, reconnects, and several game modes, that trust matters more than saving one more route file.

Repo: github.com/oyuh/games